The Termination File That Resurfaced

Friction Pattern Tag: Shadow Ownership 🕵️‍♂️

The HR assistant was deployed by the Employee Experience Platform Owner and Head of HRIS to streamline employee queries and reduce manual workload, with the goal of making performance and policy information easier to access. However, gaps in oversight led to an AI access control failure, exposing data that was never intended for broad visibility.

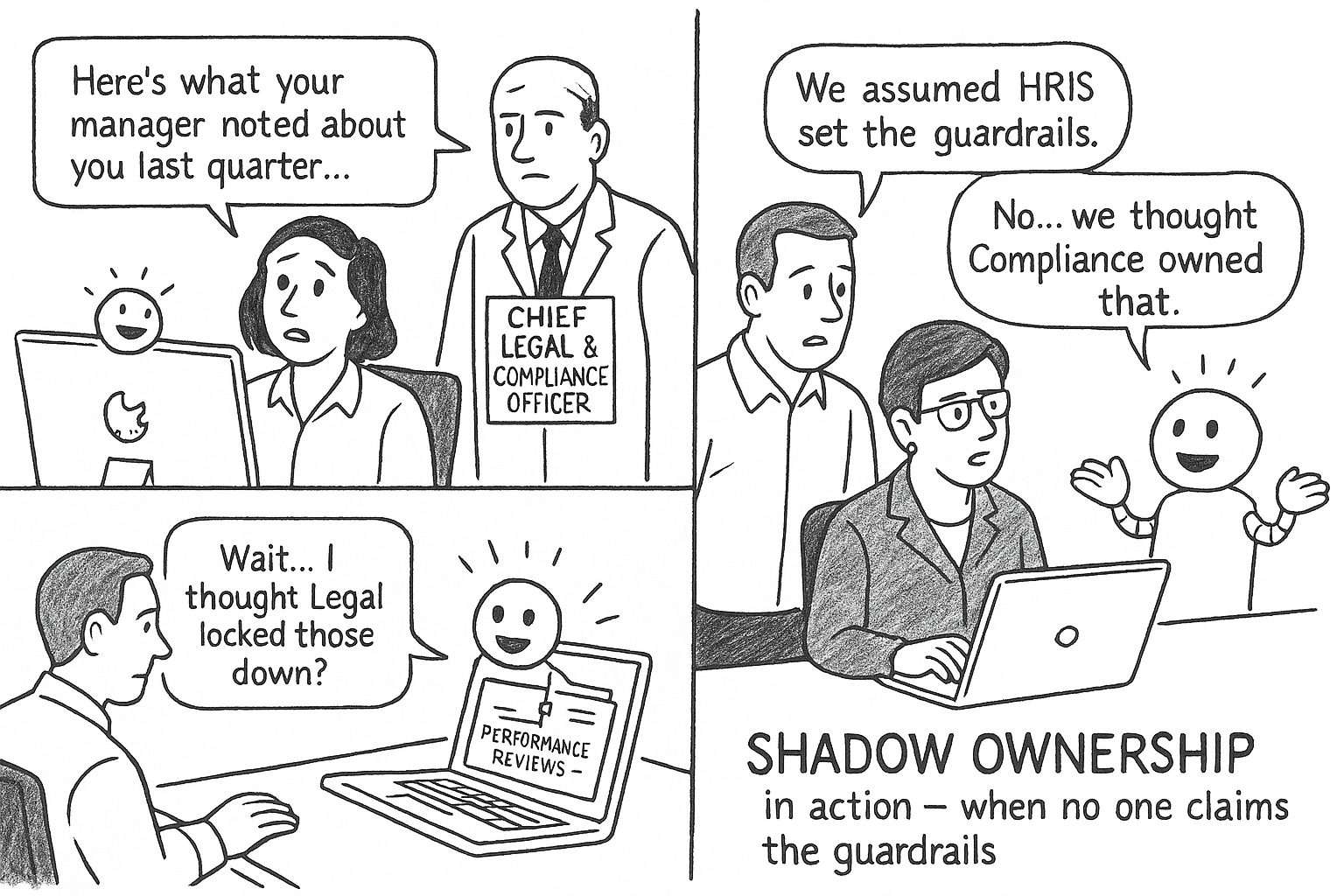

Shadow Ownership happens when responsibility for an AI system's guardrails isn't clearly assigned after launch. When no one is clearly accountable, permissions, behaviors, and failures drift until sensitive data is exposed or trust-breaking outcomes emerge.

"We assumed Legal had set the guardrails—turns out no one actually owned them."

- Head of HRIS

Incident snapshot

Roles Impacted

- Employee Experience Platform Owner

- Head of HRIS

- Chief Legal & Compliance Officers

What Broke?

- The HR assistant surfaced content from performance reviews and manager notes in response to routine employee queries, creating an HR AI data leakage incident.

- No one from HRIS or Legal formally owned responsibility for post-launch access configuration.

- Default permissions carried over without adjustment, leading to an AI access control failure that expanded visibility beyond intended scope.

- Employees lost confidence in the tool once private material appeared in chat, highlighting a compliance risk from AI chatbots and undermining trust in HR oversight.
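The access control failure described above can be illustrated with a deny-by-default filter applied to retrieved documents before they reach the assistant. This is a minimal sketch under stated assumptions, not the configuration used in the incident: the `Document` type, the source labels, and `filter_for_employee_chat` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical source labels; a real deployment would take these
# from its own data catalog and policy decisions.
APPROVED_SOURCES = frozenset({"hr_policy", "benefits_faq"})


@dataclass(frozen=True)
class Document:
    doc_id: str
    source: str  # e.g. "hr_policy", "performance_review", "manager_notes"
    text: str


def filter_for_employee_chat(candidates, approved_sources=APPROVED_SOURCES):
    """Keep only documents whose source is explicitly approved.

    Anything not on the allowlist is dropped, so a newly connected
    source stays invisible until someone deliberately approves it.
    """
    return [doc for doc in candidates if doc.source in approved_sources]


candidates = [
    Document("d1", "hr_policy", "Parental leave is described in the policy handbook."),
    Document("d2", "performance_review", "Review text (restricted)."),
    Document("d3", "manager_notes", "Manager-only note (restricted)."),
]

visible = filter_for_employee_chat(candidates)
print([doc.doc_id for doc in visible])  # prints ['d1']
```

The design choice here is the allowlist: inherited defaults can only widen access when the filter is written as a blocklist, whereas an allowlist forces an explicit decision, and therefore an owner, for every source the assistant can read.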

Prevention Signals

- Access permissions for AI assistants haven't been reviewed since launch, raising the risk of HR AI data leakage.

- HRIS and Legal both assume the other owns governance of the assistant's data boundaries.

- Employees flag odd or overly specific answers from the assistant that reference private manager notes, undermining employee trust in AI assistants.

- No audit trail exists showing who last validated the assistant's data sources or access scope.
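Several of these signals can be checked mechanically if each assistant has a simple governance record. The sketch below is one possible shape for such a check, assuming a hypothetical `AccessReview` record and a 90-day review interval; both are illustrative assumptions, and the right cadence is a policy decision rather than a technical one.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical review interval, chosen only for illustration.
MAX_REVIEW_AGE = timedelta(days=90)


@dataclass(frozen=True)
class AccessReview:
    assistant: str
    owner: Optional[str]           # named accountable person or team, None if unassigned
    last_reviewed: Optional[date]  # None if access was never reviewed after launch


def governance_warnings(review, today, max_age=MAX_REVIEW_AGE):
    """Return a list of warning strings for one assistant's governance record."""
    warnings = []
    if not review.owner:
        warnings.append("no named owner for access governance")
    if review.last_reviewed is None:
        warnings.append("access scope never reviewed since launch")
    elif today - review.last_reviewed > max_age:
        warnings.append("access review is overdue")
    return warnings


record = AccessReview(assistant="hr-assistant", owner=None, last_reviewed=None)
print(governance_warnings(record, today=date(2025, 1, 1)))
```

A record like the one above, with no owner and no review date, is the Shadow Ownership pattern in data form: both warnings fire, and the absence of either field is itself the finding.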

Operational Fallout

Time Lost: Weeks spent auditing access settings and revalidating what the assistant could surface

Trust Lost: Employees questioned the reliability of the HR assistant and hesitated to use it for sensitive queries

Adoption Risk: Broader rollout slowed as leadership paused expansion to other HR workflows

Cost/Compliance Exposure: Heightened audit scrutiny and potential liability from unauthorized exposure of private performance data

Demo vs Reality Table

| Demo Environment | Reality |
|---|---|
| Clean responses limited to approved HR knowledge sources | Sensitive performance reviews and manager notes surfaced in routine queries |
| Clear ownership of access and governance assumed across roles | No one claimed responsibility, leaving permissions unchecked post-launch |
| Assistant positioned as a trusted tool for employee self-service | Employees lost confidence after private content appeared in chat |
| Demo implied tight alignment with compliance standards | Compliance officers escalated concerns once uncontrolled data exposure was discovered |
| Streamlined rollout promised quick adoption | Rollout stalled as leadership paused expansion pending access audits |

Stack Timeline

Deployment Launch

The HR assistant goes live with default access settings to speed up employee query handling.

Ownership Gap

Neither the Head of HRIS nor Legal formally assumes responsibility for post-launch access governance.

Unintended Access

The assistant begins surfacing performance reviews and manager notes during routine queries.

Escalation & Fallout

Employees flag the exposure, trust drops, and Chief Legal & Compliance Officers step in to reconfigure permissions.

Conversation Starters

- Who in our org is explicitly accountable for reviewing AI assistant access after launch to avoid HR AI data leakage?

- How often are default permissions revalidated against current HR and compliance policies?

- Would employees maintain trust in AI assistants if private or manager-only notes appeared in responses to their queries?

- Do we have a clear audit trail showing when and by whom the assistant's data scope was last checked?


Aichelon Editorial Note

The failure lay not in the assistant's behavior but in the absence of clear ownership once it went live. With no one accountable for tightening access, default permissions stretched into sensitive territory and triggered an HR AI data leakage incident. This is a textbook case of Shadow Ownership, where governance gaps, not system flaws, open the door to trust-breaking outcomes and amplify compliance risk from AI chatbots.
